Illusion Of Understanding — Know What You Don't Know

How to avoid one of the biggest pitfalls in decision making: the illusion of understanding.

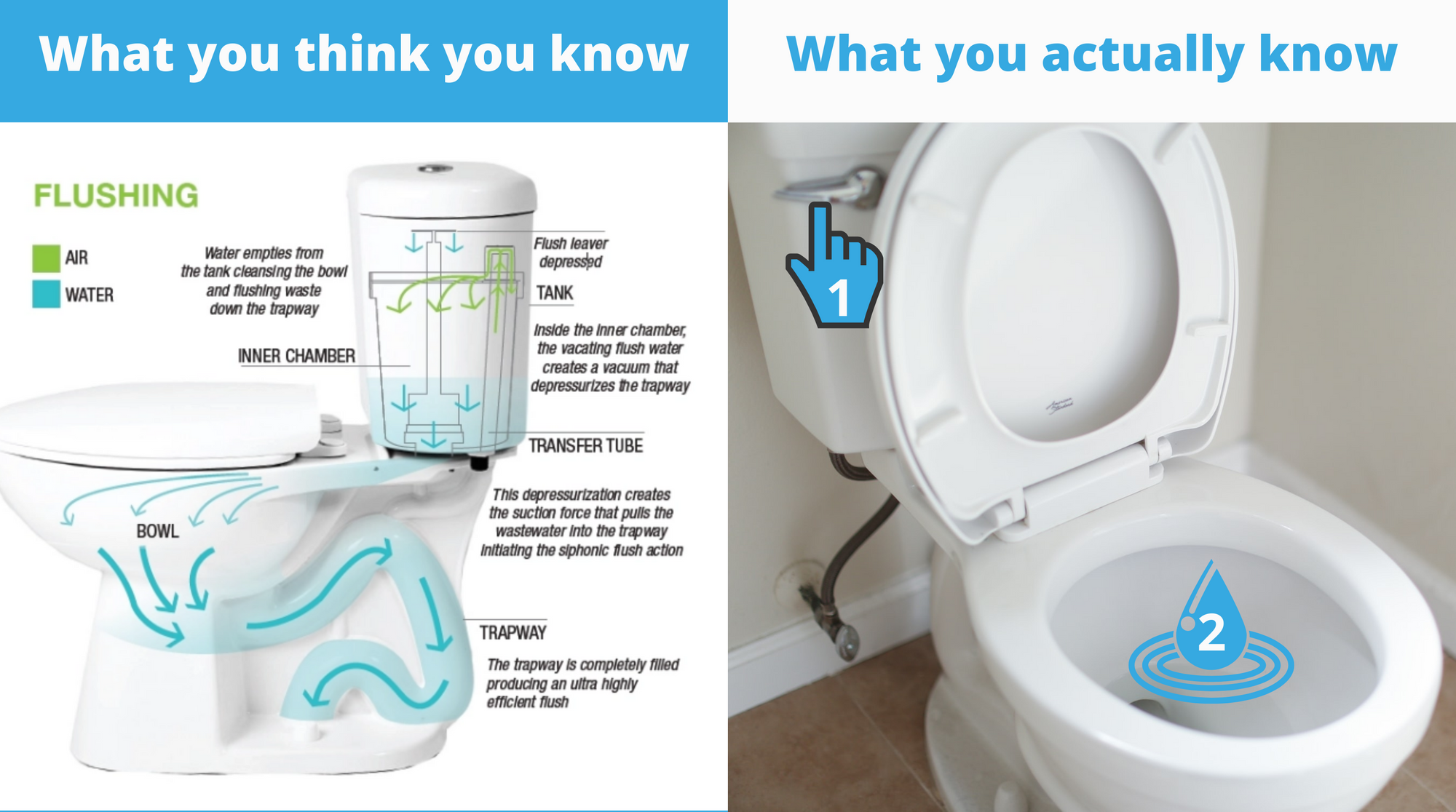

Previously, I wrote about embracing uncertainty to make better decisions. In this post, I'll be writing about how to avoid one of the biggest pitfalls in decision making: the illusion of understanding. Knowledge (things you know) and ignorance (stuff you don't understand) both contribute to your belief (what you think is true). Ignorance, or the lack of information, makes betting (decision making) difficult. But assuming you know when you don't can be even more dangerous. Have you ever met someone who defended their position on an issue but couldn't explain why in detail? Stick around, because this could be you.

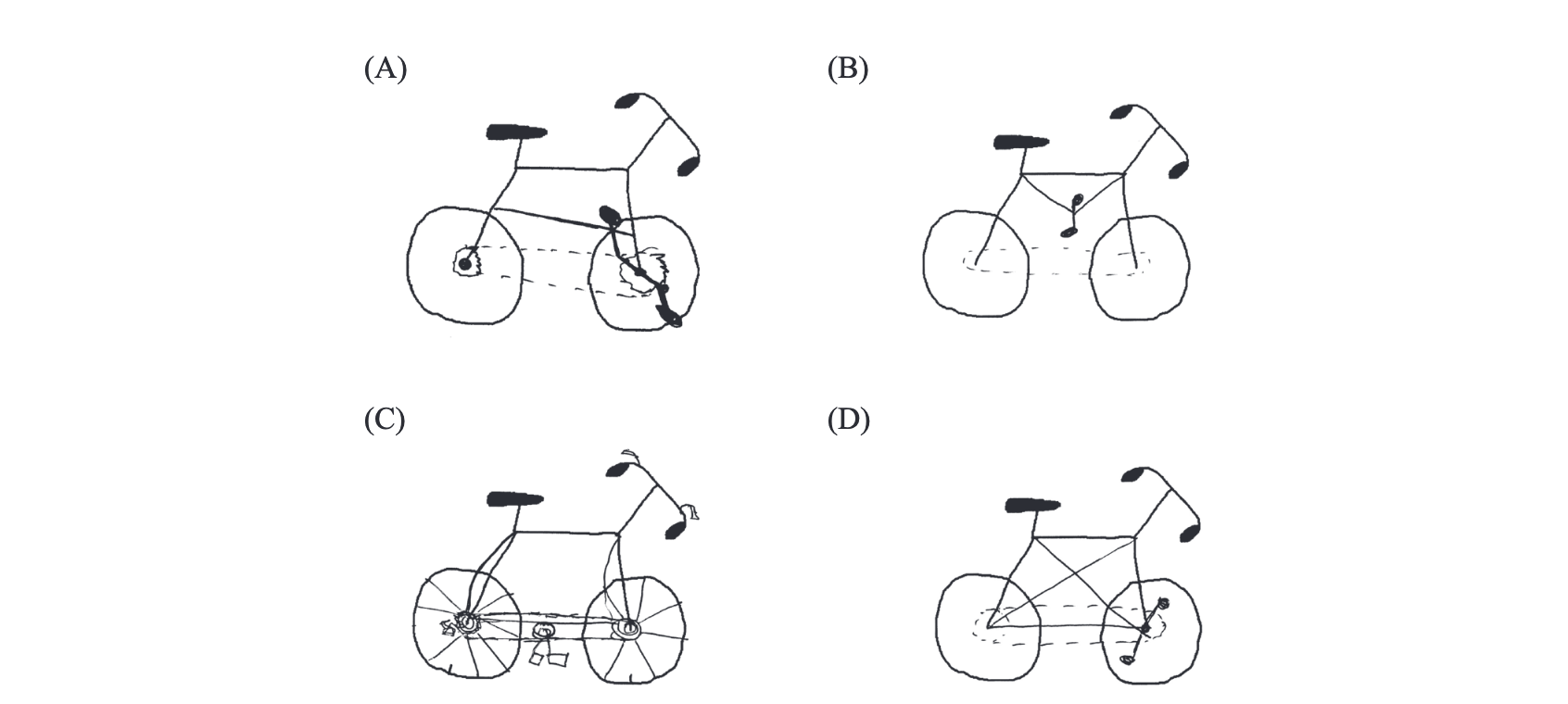

Let's make this interactive. Without scrolling further, grab a piece of paper and draw a bicycle. Use the image above as a starting point. Add the frame, pedals, and chain. You get a pass if you don't know what a bicycle looks like or how they work, but I doubt anyone reading this falls into that category. If you think you know how bikes work, try to complete the challenge.

Easy, right? Now bring your bicycle to life. Is it functional? The test comes from a 2006 study [1]. The researchers found that over 40% of participants drew bikes that wouldn't work in real life. Bicycles are objects most of us feel knowledgeable about, so how come we don't know how they work? This is the illusion of understanding. We think we understand things well until we have to explain them in detail.

Buy This Stock

As a "finance guy," I get stock recommendations all the time. Mostly, I listen and then ignore the recommendation. But sometimes I ask why I should buy it. I usually get the same answer: ABC is about to double because of this, this, and that. There is typically a level of certainty around the pitch. These individuals do a lot of research and have modeled ABC's success in spreadsheets. But as we get into a conversation, they figure out that there's a flaw somewhere in their belief. I wish I could say it's because I'm impressive, but I'm not. I'm usually just standing there, letting them think out loud. Only then do they realize that they haven't thought it through.

Before I got into Decision Making (the field), I always wondered how people could go from being sure (they've usually placed their bet already) to being uncertain in a few minutes. In cases like these, it's because they learned that they didn't know as much as they thought they did. If you've ever been here, it's a good thing. Updating your beliefs after getting new information is crucial to making good decisions. What's worse is ignoring further information because it contradicts your beliefs. I'll write more about this in a future post.

A good way to avoid this pitfall is to talk to people about the decision. Write it down first, have a conversation with other people about it, then go back and read what you initially wrote. In the case of a stock, looking at the bear case as a bull is valuable. At work, asking someone else to review your work can make you better at your job. Software engineers are familiar with this process. The worst-case scenario is that the other person completely agrees with you. It's the worst case because you've learned nothing new that could impact your belief. The best thing that could happen is that they provide new information you hadn't previously considered. This new information can either support or go against your belief. Asking for feedback can be difficult because we humans seek validation and recognition from our peers. Having someone tell you that something won't work is not great, but finding out after the fact is even worse.

Narrative Fallacy

Why did the stock market go up today? Why is it down? Why is it that the same event can be used to explain both? Take the coronavirus, for example. On a red day, headlines can say: "Stocks dip as investors worry about the rising cases of the coronavirus." It makes sense so far. If the same day is green, those same headlines could read: "Stocks jump as investors shrug off rising cases of the coronavirus." Each headline sounds plausible on its own. But if you sit and think about it, it shouldn't make sense that the same event explains both moves. Our brains simply prefer the easy-to-understand and familiar explanation when trying to make sense of something complicated.

In his book, Thinking, Fast and Slow, Nobel laureate Daniel Kahneman calls this associative coherence. The fast-thinking (System 1) part of our brain will substitute a difficult question with something simpler and related. It likes to keep a familiar narrative (a story). Even worse, that same part of our brain prefers these substitute conclusions whenever they are presented to us. The headline writers could be falling for System 1 thinking, or maybe they know that their audience will fall for it (ad revenue). It could be both: a self-reinforcing loop where the writers believe what they are writing because their audience never gets tired of it.

We tend to think like those headline writers. We make sense of events by substituting them with familiar stories. This can lead us to believe that we understand the past more than we actually do. We see things that happened in the past and expect the future to be similar. But what about all the events that could easily have happened but failed to come to fruition? What about chance? Ignoring nonevents (alternate histories) leads us to believe the future will look a lot like the past because that past is all we know. But that past is just one of many.
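One way to make "alternate histories" concrete is a small simulation. The sketch below is a toy model, not a claim about how real markets behave: it assumes a made-up asset that moves up or down 1% each day with equal probability, so there is no trend to discover. The function names, the 250-day horizon, and the 10% threshold are all hypothetical choices for illustration.

```python
import random


def simulate_history(days, rng):
    """Return the final cumulative return of one random history.

    Assumption for illustration: each day moves +1% or -1% with equal
    probability, so no real trend exists in the data.
    """
    value = 1.0
    for _ in range(days):
        value *= 1.01 if rng.random() < 0.5 else 0.99
    return value - 1.0


def alternate_histories(n_histories=10_000, days=250, seed=42):
    """Simulate many equally plausible pasts and return their final returns."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    return [simulate_history(days, rng) for _ in range(n_histories)]


if __name__ == "__main__":
    outcomes = alternate_histories()
    up_10 = sum(r > 0.10 for r in outcomes) / len(outcomes)
    down_10 = sum(r < -0.10 for r in outcomes) / len(outcomes)
    print(f"Histories that gained more than 10%: {up_10:.0%}")
    print(f"Histories that lost more than 10%: {down_10:.0%}")
```

Under these assumptions, a sizable share of histories end the year well up and a similar share end well down, even though every one of them came from the same coin flip. If you only lived through one of the "up" histories, it would be easy to tell a convincing story about why it had to happen.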

If you're starting to feel like a reverse Dr. Strange, well, you should. Strange reviewed all 14,000,605 futures when trying to defeat Thanos. It's funny that he's played by Benedict Cumberbatch, who also plays Sherlock Holmes, a pretty good decision-maker.

Delve Deeper

Embrace Uncertainty

Books

- If you've never read Thinking, Fast and Slow, you should pick up a copy. Being aware of your cognitive biases will make you a better decision-maker.

- If you want to understand why stories are so important to us humans, read Sapiens: A Brief History of Humankind by historian Yuval Noah Harari.

- Mastermind: How to Think Like Sherlock Holmes by Maria Konnikova, a professional poker player, is also a good read.

Sources

[1] - https://www.liverpool.ac.uk/~rlawson/PDF_Files/L-M&C-2006.pdf

Did you draw the bike? Could you share it with me? It can't be worse than what the participants in the study came up with.